Monitor progression

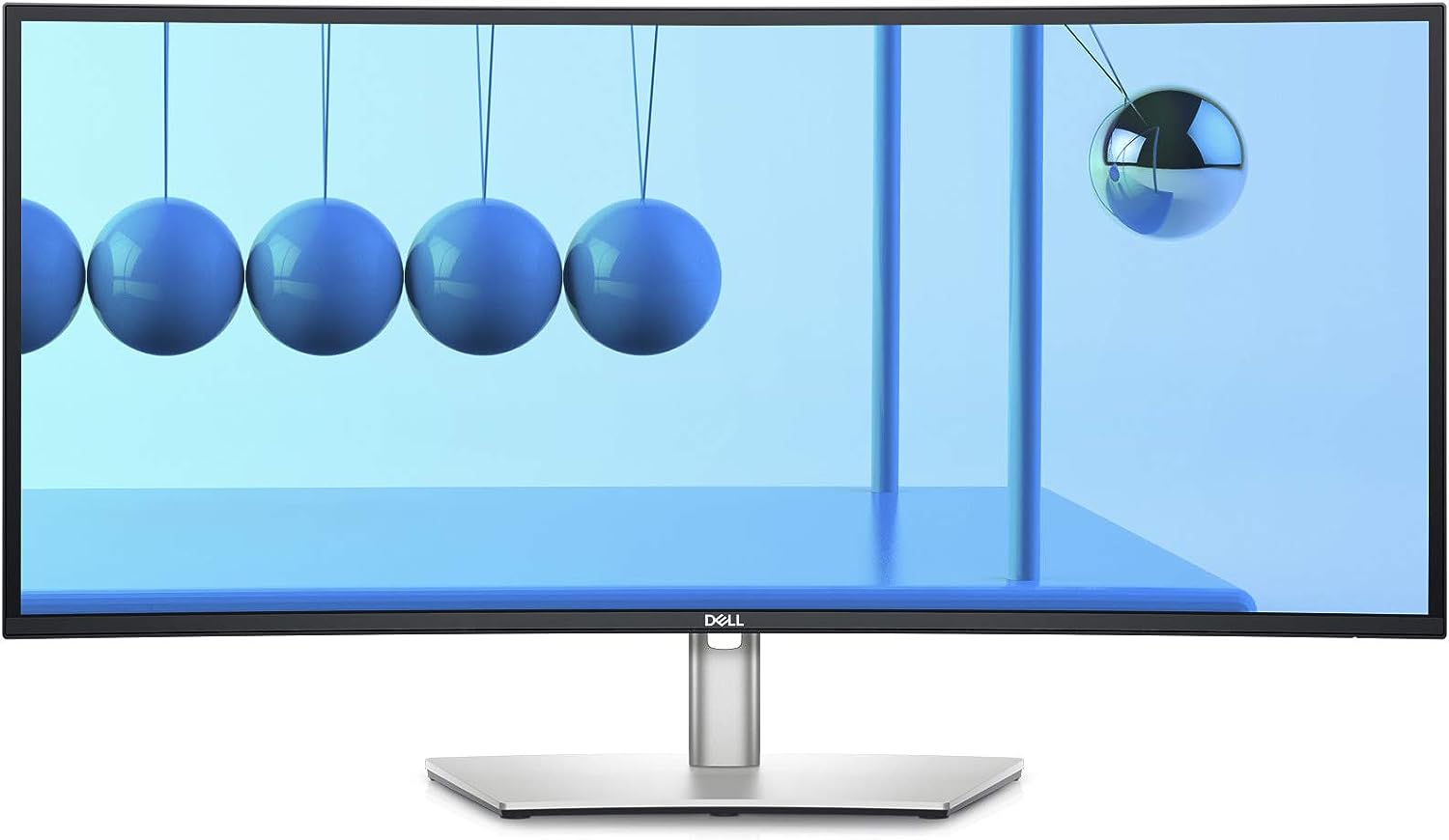

Ultra wide monitor

For years I was using a 34” UWQHD monitor from LC-Power, the LC-M34-UWQHD-100-C. It was a cheap monitor, but it worked, and it was very wide.

While I had really gotten used to that horizontal space, I was not happy with its overall quality (picture and build), so one day in 2021 I decided that I needed to upgrade my monitor.

At that time I had my 13” MacBook Pro, and while the resolution of the monitor was OK, it was not really ideal for macOS. I had to use the 100% size mode, which is a bit too tiny for my taste, but the next natively supported resolution was just way too big.

There were some tools out there that tricked macOS into rendering an oversized picture and then scaling it down to the resolution of the monitor, but those tools needed to create a separate virtual screen buffer, which consumed quite a big portion of my RAM, so I stuck with the 100% scaling.

I used a CalDigit docking station so that I only had to plug one cable into my MacBook to have all my peripherals connected. I had really gotten used to that and didn’t want to miss it in any new setup.

Then I discovered Dell’s lineup of monitors and saw an ultra-wide monitor, the DELL U3421WE, with exactly the same size and resolution as the LC-Power (34”, UWQHD) but with much better picture and build quality.

The monitor was also advertised as having a docking station built in, meaning I could get rid of the CalDigit as well, so I ordered one to test.

Once it arrived and I had set it up, I was really happy with the picture quality and the overall build quality. The main issue was that I had to decide, in the on-screen menu, whether I wanted a higher resolution / refresh rate or a higher USB-C speed 🙁

The second, much more critical, issue was that the built-in network adapter did not seem to be compatible with our network system (AmplifiHD at that time, but that’s another story): whenever I plugged the network cable into the monitor, the whole network stopped working a few minutes later. This caused quite a few complaints from family members until I found the cause of the issue 😅.

Because I was really happy with the picture quality and there were no real alternatives with a higher DPI, I decided to keep it and live with using the CalDigit docking station for now.
